This April was quite intense. All our engineers are now equipped with a rich set of AI tools, significantly increasing throughput. Internally, we have gone through the AI adoption curve, reached a more or less clear understanding of its capabilities and limitations, and are now working productively as usual – maintaining high software quality while delivering at a higher pace.

The result is pretty interesting – the bottleneck has shifted to human capacity to keep up with everything we are now producing, both externally as new product features and internally through product refactoring. As a team, we have reached our highest throughput so far. Going beyond this level will likely begin to affect team consistency.

Despite a high development pace and a significant amount of internal refactoring, we finished April with 100% uptime.

We implemented a recycle bin for containers and CDNs. Storage entities, used mostly by engineering teams relying on flespi as a cloud storage platform, are now safer to operate and aligned with telematics gateway entities, where deletion is recoverable.

We also introduced an IP whitelist for secure access to files stored on the CDN.

We implemented the ability to upload media files (video, images, tachograph data) to device media storage via REST API. This can be used for migrations from other systems or for pipeline simulation. We are also working on extending device media storage to accept various log files produced by telematics hardware in manufacturer-specific formats. This will improve remote diagnostics and simplify evidence collection for device manufacturer support.

We released TachoBox – a new UI utility for visualizing data parsed by the tacho-file-parse plugin and attached to .ddd files stored in flespi. This is in no way an attempt to replace specialized tachograph tracking services – just a convenient utility built on top of existing flespi functionality.

We also released a number of tacho functionality improvements and are preparing a new version of the Tacho Bridge App with enhanced UI/UX.

We are currently working on a WebRTC video/audio streaming service. Compared to HLS or FLV, WebRTC enables bidirectional audio communication with the device – for example, allowing direct communication between dispatcher and driver via the telematics device, eliminating the need for a phone.

Several new features were implemented in our open-source MQTT Board application, including a convenient flespi topic constructor and various UI/UX improvements.

One of the key internal topics in April was our REST API mutation. Initially, about eight years ago, we designed a read-only device messages calculation method as POST due to its heavy processing nature. Over time, as we introduced more calculation-type API calls (e.g., log calculations), we continued using POST for consistency. However, in 2026, AI changes the context.

When we designed flespi MCP servers with dedicated tools – flespi_api_read (for read-only operations, safe for AI usage) and flespi_api_write (for POST, PUT, PATCH, DELETE methods, which require more control or restrictions) – we encountered a mismatch: our calculation endpoints do not fully align with this safety model.
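The mismatch is easier to see in a minimal sketch of the routing rule described above. Only the tool names flespi_api_read and flespi_api_write come from our MCP servers; the function name and the method sets are hypothetical and serve purely as illustration, not as the actual MCP implementation.

```python
# Illustrative only: the tool names come from the text above; the routing
# function and the method sets are hypothetical, not flespi's MCP code.
READ_ONLY_METHODS = {"GET"}
MUTATING_METHODS = {"POST", "PUT", "PATCH", "DELETE"}


def tool_for_method(http_method: str) -> str:
    """Pick the MCP tool that would carry a request with this HTTP method."""
    method = http_method.upper()
    if method in READ_ONLY_METHODS:
        return "flespi_api_read"
    if method in MUTATING_METHODS:
        return "flespi_api_write"
    raise ValueError(f"unsupported HTTP method: {http_method}")


# A calculation that only reads data but is exposed as POST ends up
# behind the restricted write tool, which is the mismatch in question.
print(tool_for_method("GET"))
print(tool_for_method("POST"))
```

Under a rule like this, any read-only calculation exposed as POST is treated as a write operation, even though it changes nothing in the account.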

So we decided to refactor these API methods to make them more efficient for AI agents and reasonably safe for humans behind them. As a result, some read-only POST API calls are now deprecated in favor of GET. You should adapt your integrations and switch to the corresponding GET methods for these endpoints:

Device and logs calculation methods are scheduled for removal on July 1, 2026, along with the corresponding token ACL entries.

Expression testing methods are scheduled for removal on May 21, 2026.

AI API lookup methods are scheduled for removal on May 21, 2026.

One more change, not directly related to AI: in realms, realm/user_home and token_params must be moved into the role.

And of course, there are quite a number of interesting AI-related updates.

First of all, Codi reached an impressive 98% communication rate. This means that human engineers are now directly handling only about 2% of all support communication. Of that 2%, roughly half are simple confirmations that Codi provided the correct answer, with only a few messages per day actually requiring genuine human expertise.

Jan Bartnitsky recorded a video showing how to provide an AI agent (Claude Code) with MCP tools and skills to access, monitor, diagnose, and manage your flespi account. Following this approach can save you a significant amount of time, even for diagnostics.

Based on Jan’s example, we improved the flespi SKILL.md and strongly recommend reloading it in your AI agent. We enriched it with a number of tricks to enable more efficient use of the flespi API and, by default, removed references to the powerful but slower consult-flespi-account tool (also known as Codi via API). According to our internal MCP evaluations, the current version of the skill instructions is already sufficient for an AI agent to design solid solutions directly within your flespi account, as well as perform deep investigations, health diagnostics, and consistency checks.

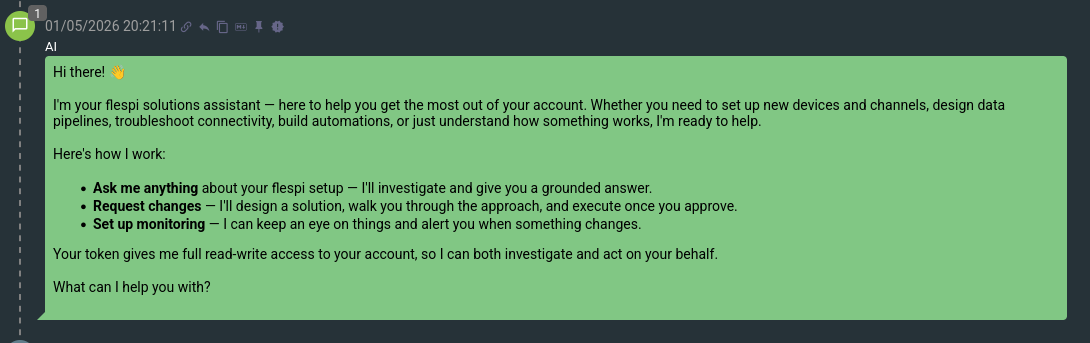

In addition, we decided to apply our existing AI protocol development technology beyond protocol engineering and reuse it for other domains. The result is quite impressive – within just a few days, we built an AI agentic system called flespi concierge, with full API access scoped by a flespi token, capable of consulting, analyzing, monitoring, and improving flespi accounts.

When I tested it on tasks such as realm management and device or channel analysis, I got the feeling that I would not want to go back to manual account management via the flespi.io panel UI. It is smart, proactive, and designed to be safe, protecting the account from unintended actions.

The technology we developed for long-running, complex agentic tasks such as protocol development turned out to be highly effective for non-development use cases as well – and in this context, it feels much more suitable than typical AI agentic systems.

Safety remains at the core of this system. Any potentially dangerous API action is controlled by ACL and can be reviewed by a human or an AI concierge operator before execution. There is no way for the AI to bypass this security layer. This level of safety makes it possible to use such AI agents in enterprise environments as well as for personal flespi account management.
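As a rough illustration of this kind of gate, here is a minimal sketch that assumes the ACL is a set of allowed HTTP methods and that a second set marks methods requiring review. Every name in it (gate, allowed_methods, review_methods, approved) is hypothetical; the real concierge works with flespi token ACLs and is not implemented this way.

```python
# Hypothetical sketch of an "ACL first, then review" gate.
# None of these names exist in flespi; they only illustrate the idea.


def gate(method: str,
         allowed_methods: set[str],
         review_methods: set[str],
         approved: bool = False) -> str:
    """Return 'deny', 'pending_review', or 'execute' for an API action."""
    m = method.upper()
    if m not in allowed_methods:
        # The ACL is checked first, so approval cannot override it.
        return "deny"
    if m in review_methods and not approved:
        # Allowed by the ACL, but held until an operator approves it.
        return "pending_review"
    return "execute"


acl = {"GET", "PUT", "DELETE"}       # assumed token permissions
needs_review = {"PUT", "DELETE"}     # assumed "potentially dangerous" set

print(gate("GET", acl, needs_review))
print(gate("DELETE", acl, needs_review))
print(gate("DELETE", acl, needs_review, approved=True))
print(gate("POST", acl, needs_review, approved=True))
```

The ordering is the point of the sketch: the ACL check runs before the review check, so an approval can release an action the ACL permits but can never unlock one it forbids.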

We tested the system using the same types of questions and tasks that users typically bring to Codi and obtained comparable results. However, while Codi cannot modify the account or monitor it for specific activity, this system can do both. In some cases, such as diagnosing calculators – where even a human might struggle – the concierge goes further than Codi by proactively executing calculation API calls and exploring different configurations. This is genuinely impressive.

We plan to package this functionality for external use in the coming months and provide an AI concierge service to our users. It will be available both in the UI and via API, allowing you to use stateful AI agents for your own operations. Looking ahead, you may only need a WhatsApp, Telegram, or Slack account to manage and monitor your flespi project, while a flespi concierge agent handles the rest on your behalf.