Finally, spring has come to Europe. Today is one of those first sunny spring days after a cold and snowy winter, when you turn your face to the sun and simply enjoy walking outside. In the background, AI is working, resolving new issues within minutes. And when there are no new problems, it designs tasks for itself and improves our codebase, addressing all those things we never had time for. Almost a utopia, isn’t it?

Unfortunately, we live in the real world, where many events are unfolding, and some of them are far from good – the threat of a new long war, missiles flying across Eastern Europe and now the Middle East as well, and politicians with evil intentions crushing people’s families and lives. And this is our reality today…

In this reality, flespi ended February with 99.9953% monthly uptime. During scheduled maintenance on our routers, a human error led to a split-brain misconfiguration in one of the data center routers. The issue was instantly detected by our uptime monitoring system and reported to the NOC, and once it was identified, we fixed it in less than two minutes.
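As a quick sanity check of these numbers, the small sketch below converts a monthly uptime percentage into the downtime it implies. It assumes uptime is measured over the full calendar month (February 2026 has 28 days); flespi's actual measurement window is not described here and may differ. The function name `downtime_minutes` is purely illustrative.

```python
# Convert a monthly uptime percentage into the implied downtime.
# Assumption: uptime is measured over the whole calendar month;
# February 2026 has 28 days.


def downtime_minutes(uptime_percent: float, days_in_month: int) -> float:
    """Return the downtime in minutes implied by an uptime percentage."""
    total_minutes = days_in_month * 24 * 60  # 28 days -> 40,320 minutes
    return total_minutes * (100.0 - uptime_percent) / 100.0


if __name__ == "__main__":
    minutes = downtime_minutes(99.9953, 28)
    print(f"{minutes:.2f} min ({minutes * 60:.0f} s)")
```

Under that assumption, 99.9953% of February corresponds to roughly 1.9 minutes (about 114 seconds) of downtime, which is consistent with a fix delivered in under two minutes.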

One of the most awaited features we promised to release in February was live video streaming using HTTP-FLV technology. It was delivered just in time, and the technology itself is clearly superior to the HLS streaming we previously relied on. Our initial tests showed video latency of less than one second, despite the complex pipeline involved in processing video data from telematics devices and delivering it to the user’s browser.

We are now working on stabilizing the technology and fine-tuning existing JavaScript libraries and their configuration to handle video efficiently in the browser. Mediabox has already switched to FLV and includes these adjustments, so you can test it right away. We will share whatever we discover during this process to help our users build efficient video players into their own solutions.

HLS will remain available as a streaming option. However, we slightly modified the log format and the start stream command response format to support both technologies. If you are building a video telematics solution on top of flespi, please adapt your integration in March, as the legacy “hls” log entries, command parameters, and connection parameters will be deprecated.

Alongside the FLV streaming release, we published our first rating of video devices in flespi. Streamax is the clear leader: 73% of all video devices registered in flespi are produced by this manufacturer. The fight for second place, however, is much tighter, with 7.3% held by Jimi IoT and 7% by Howen. All of these manufacturers are competing with bundled solutions, where the device vendor locks customers into their own software ecosystem. We will do our best to ensure that our hardware and software partners stay competitive in this landscape.

To strengthen this competition further, we integrated a new video device manufacturer into flespi – Roadefend.

Extracting meaningful data from .ddd tachograph files is taking more time than anticipated. There are still many open tachograph-related issues that we continuously prioritize, which slows down long-term development of the .ddd parsing feature. Nevertheless, we aim to have a ready-to-use plugin by the end of March.

That said, our new partner, who has specialized in this field for more than a decade, may already offer what you need. I suggest taking a look at Idha Online, a Sweden-based tachograph data storage platform.

One interesting new feature is the ability to run analytics queries on logs for all kinds of flespi entities – devices, channels, streams, and more. This allows you to perform server-side calculations and aggregations directly on logs. We also enhanced the MQTT Broker API by exposing additional information for sessions and subscriptions.

In the flespi panel, we introduced a full-featured vehicle dashboard powered by CAN parameters. Combined with a live video feed from the vehicle, it can make you feel almost as if you were actually driving the car.

I know you are probably tired of hearing about AI everywhere, so I left it for the very end of this publication. Everything below is about AI, so if you do not want to read about it, you can stop here – you won’t miss anything else.

But if you are still curious about this technology and the changes it brings to our world, you are welcome to continue reading. We have some exciting news in the air.

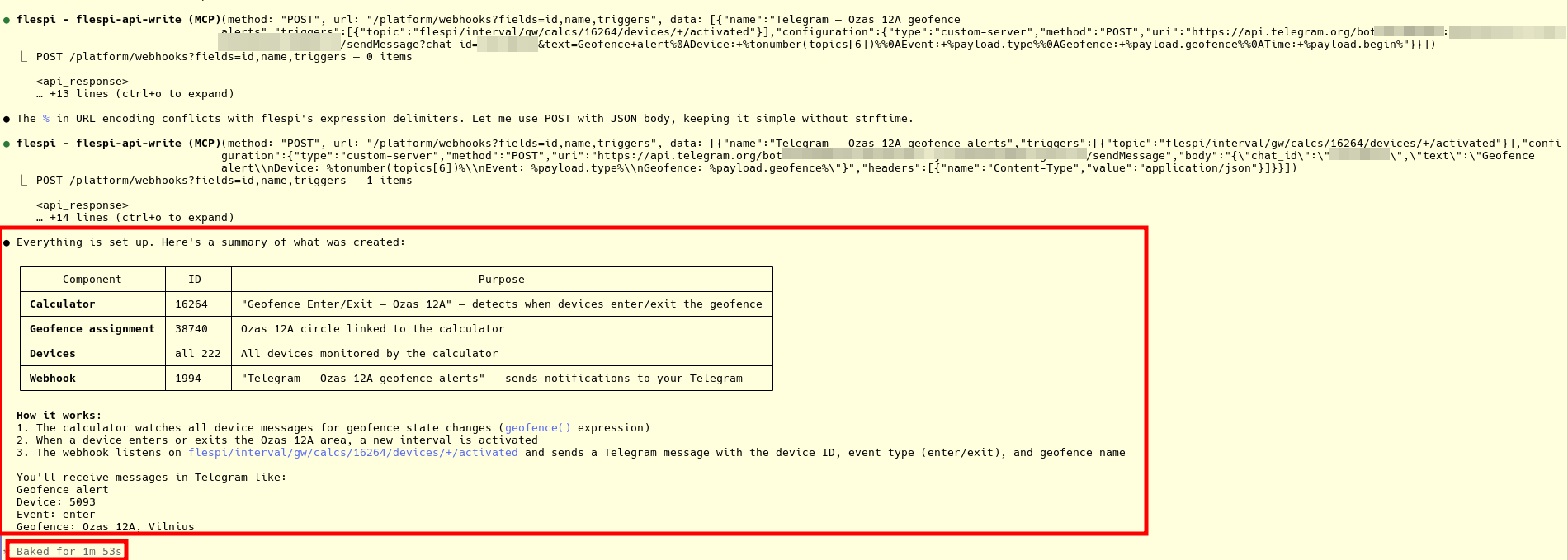

First of all, we deployed MCP connectors for flespi. They are currently in a highly experimental mode – the toolset, instructions, and even the servers themselves may change at any time. Still, you can already use them and empower your AI agent with direct access to flespi.

We are preparing flespi to be fully usable by AI agentic systems across three layers, and you can choose whichever approach fits your needs best.

MCP servers – we provide a development server for flespi-based workflows and a support server for connecting your AI support agent to flespi. This is the simplest and most straightforward method.

AI-specific API tool endpoints – direct API methods that allow you to discover which API calls or MQTT topics to use, provide structured knowledge about flespi to your AI system, and so on. This is a lower-level approach for those who want precise control over context.

Skills – not yet released, but currently in development. A predefined set of capabilities that enables your AI agentic system to work directly with the flespi API. You provide the token, and the entire safety model depends on that token. This is probably the craziest, but also potentially the most productive mode, where your AI is limited only by the permissions you grant it.

With MCP servers, you can already connect Claude Code to flespi. With a clean context and a full set of available functions, it becomes possible to ask it to maintain your account – create instances, monitor account status, or handle more specific tasks like identifying which devices had the largest mileage last week and why, and so on. It’s actually a nice thing to experiment with. And honestly, quite exciting.

You can now build custom, fully functional flespi-based applications in minutes. We invite developers to try it and share feedback in HelpBox so our team can better understand how to address your specific workflows and use cases.

We are also exploring an implementation of the MCP authorization layer so that, based on realm configuration, it will be possible to give non-coding AI agents simple access to flespi. Just imagine: open ChatGPT, Claude, or Gemini, add a custom MCP server, authorize with your token, and the chatbot of your choice can now access your flespi account and perform actions there, empowered by business data from your email or CRM. Cool, yeah?

We are also deprecating existing API endpoints related to AI functionality – specifically the PVM generator API call in the platform API and access to device and flespi documentation through that endpoint. These API endpoints will be switched off in April.

Finally, together with the new AI-specific API endpoints and MCP support, we are introducing a new optional paid service: AI credits. Certain API calls that involve LLM operations, such as searching flespi documentation or generating PVM code, consume AI credits when executed. Each tier includes 1,000 AI credits per month for free. After reaching this limit, Free users will not be able to use some AI services until the next month, while Commercial users will be charged 1 EUR for every additional 100 AI credits used.
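To make the pricing rule concrete, here is a minimal estimator sketch. The 1,000 free credits per month and the 1 EUR per 100 extra credits come from the announcement above; how partial blocks are billed is not stated, so the code assumes overage is rounded up to whole 100-credit blocks. The function names are hypothetical and not part of any flespi API.

```python
import math

# Values taken from the announcement.
FREE_CREDITS_PER_MONTH = 1_000   # included in every tier
CREDITS_PER_BLOCK = 100          # Commercial users pay per 100 extra credits
EUR_PER_BLOCK = 1.0


def overage_cost_eur(credits_used: int) -> float:
    """Estimate the monthly AI credit charge for a Commercial account.

    Assumption (not confirmed): overage is billed in whole 100-credit
    blocks, rounded up.
    """
    if credits_used < 0:
        raise ValueError("credits_used must be non-negative")
    overage = max(0, credits_used - FREE_CREDITS_PER_MONTH)
    blocks = math.ceil(overage / CREDITS_PER_BLOCK)
    return blocks * EUR_PER_BLOCK


def free_user_blocked(credits_used: int) -> bool:
    """A Free account loses access to some AI services once the
    monthly allowance is exhausted (until the next month)."""
    return credits_used >= FREE_CREDITS_PER_MONTH


if __name__ == "__main__":
    for used in (800, 1_000, 1_250):
        print(used, overage_cost_eur(used), free_user_blocked(used))
```

For example, a Commercial account that uses 1,250 credits in a month has 250 credits of overage; under the round-up assumption that is 3 EUR, while strictly pro-rated billing would give 2.5 EUR.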

The cost of the tools is published here. Commercial users can manage spending and control AI credit allocation for subaccounts by configuring the “ai_credits” parameter in the corresponding limit settings. For more details, please refer to our pricing and restrictions documents.

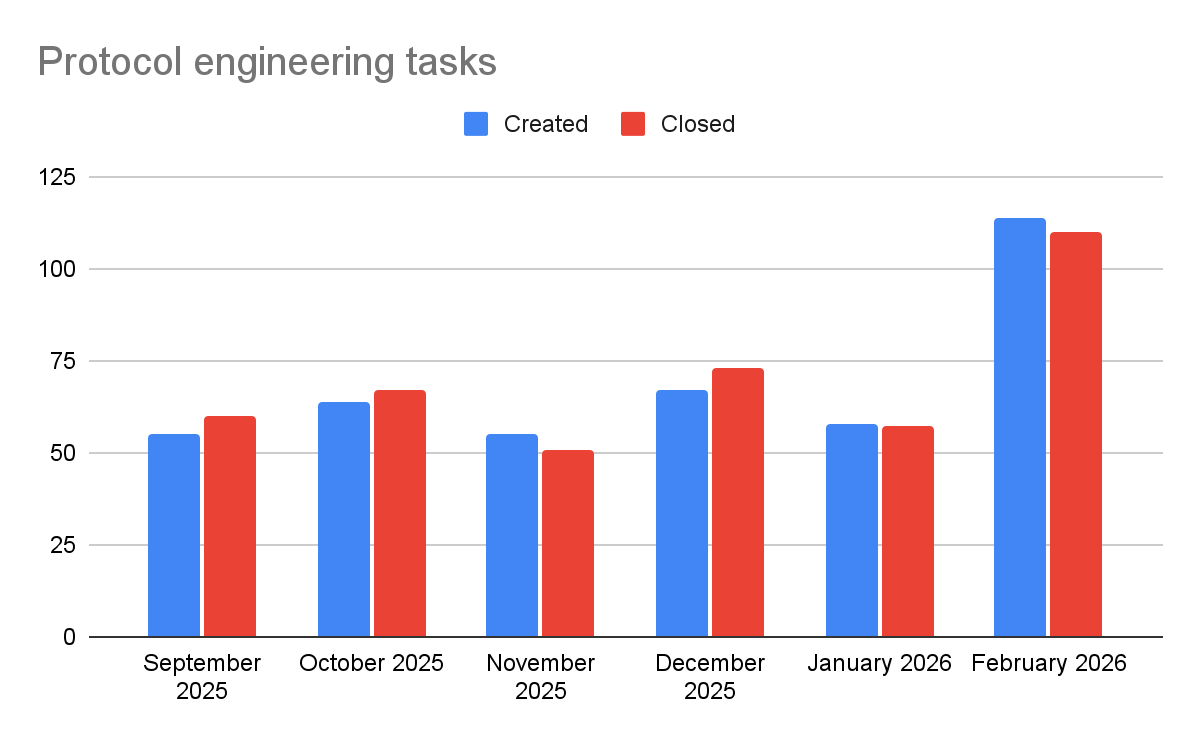

And one more thing about AI, this time about protocol development. Do you remember my article about the new workflow system in flespi? In February it matured and gained the ability to communicate with Codi and to publish changelogs on our forum. Just like Codi (our AI support engineer), which now handles around 95% (!) of support communication, this AI engineering system received its own identity: we call it Birdy (AI protocol engineer). Birdy became part of our engineering team and currently handles around 70–80% of all protocol tasks.

As with all engineers in our team, it is not limited to executing tasks created by someone else. It can also monitor protocol errors occurring in our users’ accounts and, based on documentation availability, experience with similar issues, and several other factors, create tasks to resolve them. Back in 2019, we built a protocol error monitoring system for proactive problem resolution, but it became overwhelmed around 2021–2022 due to a growing number of higher-priority tasks. Now, in 2026, we are able to restore our proactive protocol support service and once again fix issues before customers report them.

At our current scale, this is only possible with AI – not only to detect such problems, but also to investigate and fix them. On the chart below you can see the number of created and closed protocol-related engineering tasks in flespi.

As you can see, both the number of created tasks and the number of closed tasks have doubled since we started applying AI at a larger scale. And this is only the beginning.

Some may ask – what are engineers doing in flespi now, if AI is handling most of their tasks? The answer is simple: complex work. Video telematics, databases, MQTT Broker, infrastructure, and of course engineering the AI harness itself and learning how to manage its context efficiently. AI has taken over most of the routine work, giving us the opportunity to focus on complex and truly interesting challenges. In general, we are now rethinking what engineering actually is. Stay tuned!