Ordinary life

flespi team consists mostly of engineers. And if you know good engineers, most of the time they retreat into themselves, dive into the problem resolution and do not talk too much compared to the sales guys who chat a lot and have little chance to concentrate on a specific task for long.

We, at flespi, try to find a balance between the two. We develop things, chat with our users and now have a forum to communicate even more. Also, each Friday we have a so-called flespi dive-in — a special 2-3-hour meeting where we discuss the results and problems of the past week and put together a todo list for the next one. And of course, most of the time we are developing new stuff.

Something special

But one week this year was very special. We decided to talk the whole week about the future of flespi, about its architecture and, finally, about the new servers location that we have in Russia and how to seamlessly connect data centers in Russia and the Netherlands both operating autonomously but at the same time providing our users with the feeling that they are working with big and cool cloud.

Also to the list we’ve added a lot of other minor points we needed to discuss. We named this event “Recharge Local”, scheduled it for September 9-13, and the show began.

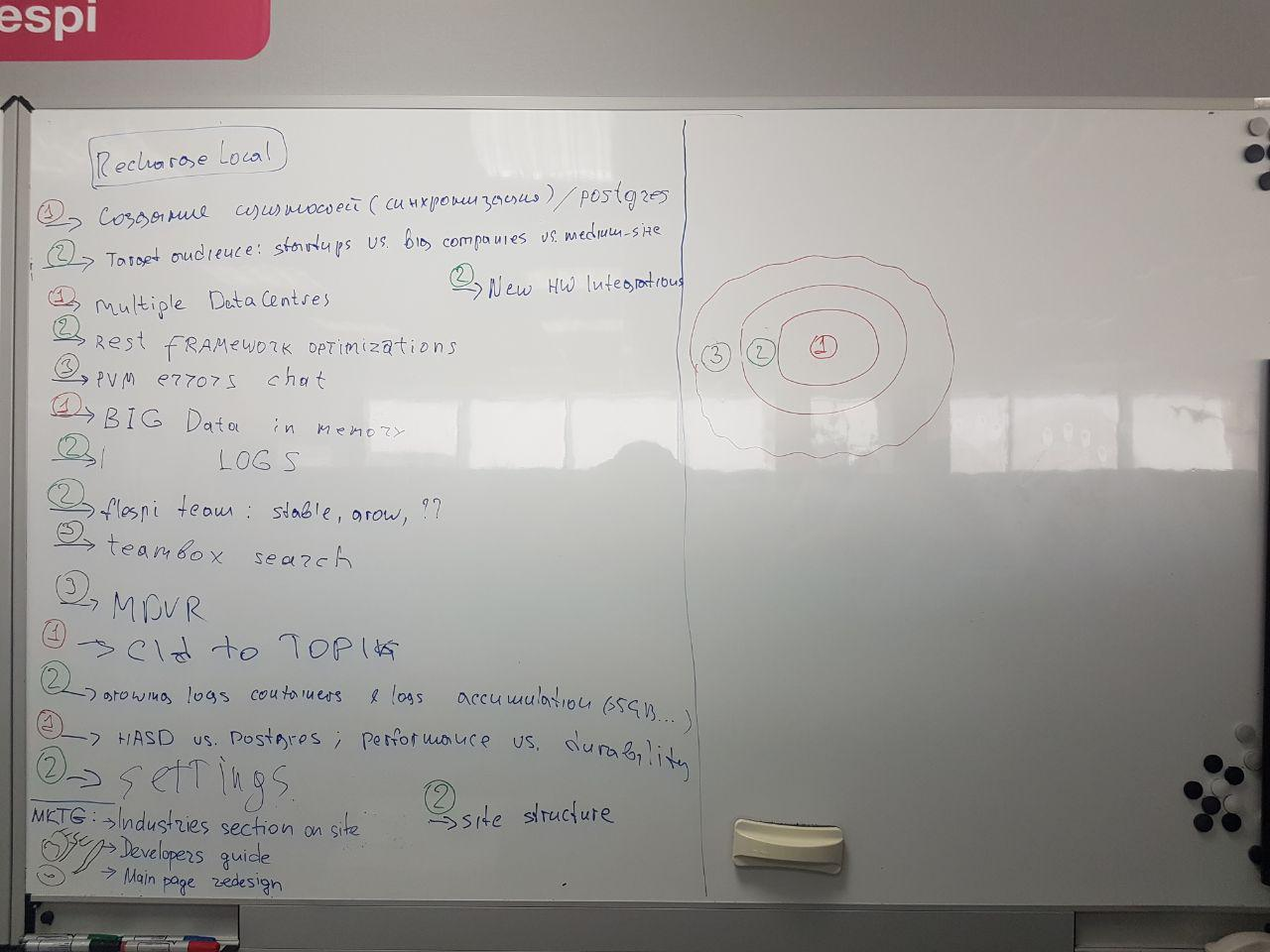

The initial list of questions already prioritized looked like this:

On the first day, we agreed on the way we are working, who and how would do users support when the team was busy at a whole-day meeting and emergency actions we needed just in case. We even decided to spend the second day at a BBQ party outside the office where the warm autumn sun and fresh air would help us jump out of the routine.

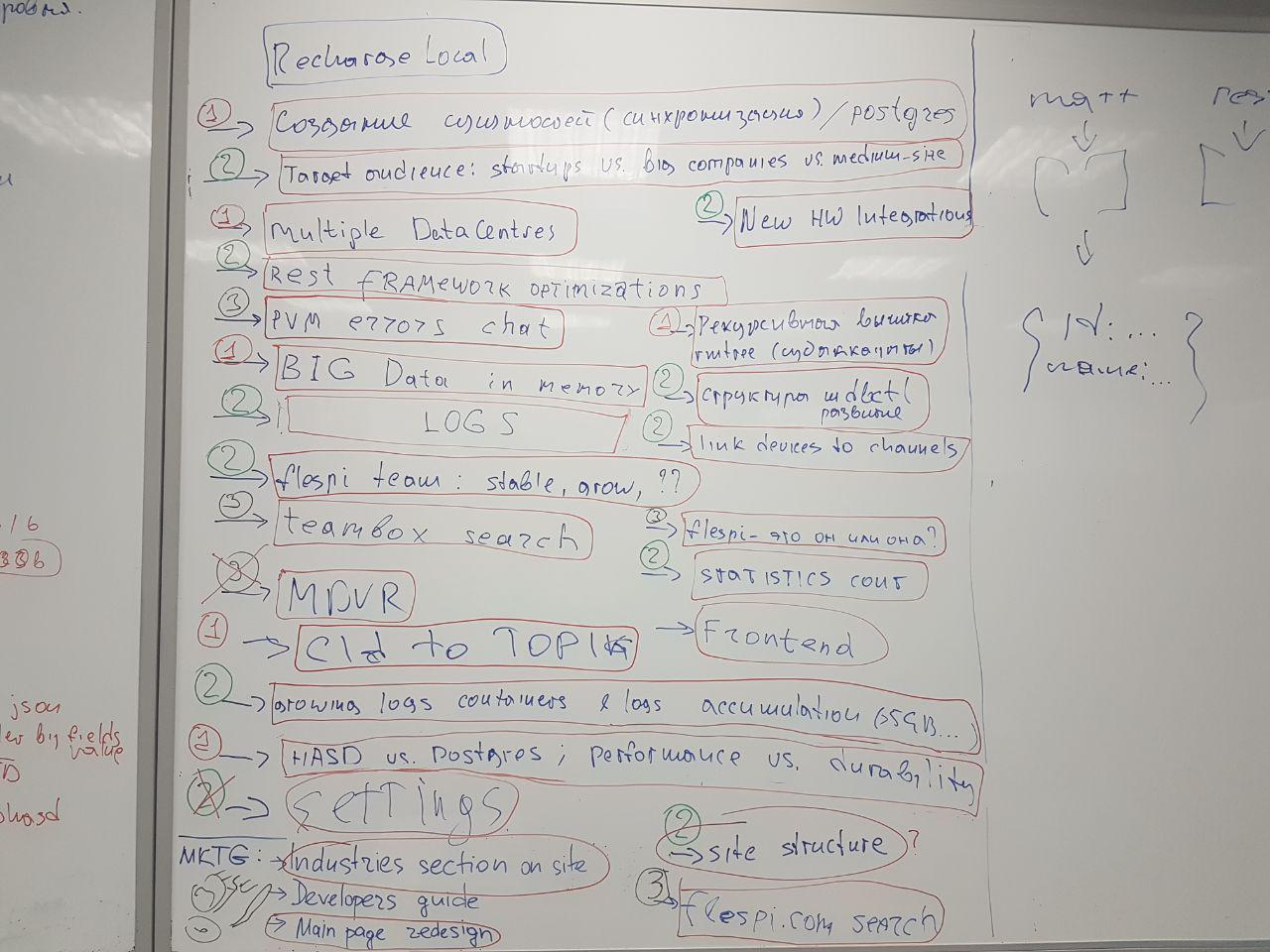

Actually this format worked. The environment was favorable for us — even our users weren’t disturbing us a lot, mostly writing HelpBox messages in the evenings. We boxed the resolved questions with a red highlighter and ended up with the following picture:

Outcomes

I won’t bother you with all the details of our discussions — there were questions that were resolved in 2 minutes but also there were questions that took days to resolve.

For example, the final resolution on how to operate flespi in multiple datacenters was stabilized only on Thursday morning — i.e. the discussion took three days. We decided that we are not going the same way as AWS, Leaseweb, OVH and other companies that make alternative locations completely separated from each other. This will be our plan B. But first, we will try to create a global cloud with embedded autonomous behavior providing our users with a seamless way to operate multiple locations. From flespi user perspective the cloud concept will look like this:

We will have regions. Each region can be served by multiple datacenters. User operates only with regions and we can move its data between datacenters that belong to one region. The current region will have the name eu and additional region in Russia will have the name ru.

Flespi users can seamlessly operate with central point flespi.io or mqtt.flespi.io that will redirect them to the correct region whenever needed. These names are aliases for the default eu region.

At the same time, there are local regional access points prefixed with the region name: eu.flespi.io for REST and eu-mqtt.flespi.io for MQTT in eu region and the corresponding ru.flespi.io and ru-mqtt.flespi.io for ru region. These names can be used whenever a user wants to connect to a specific region directly.

A region can be selected only when creating a first-level subaccount (from root account) and later can not be changed. It means that only the root account can contain subaccounts located in multiple regions.

All further flespi entities — channels, devices, streams, and calculators are created in the same region as their account.

Another important question we answered is where and how to store state data (plenty of data on our volumes) and how to access it. We are currently storing state information in the Postgres database. After many months of testing the HASD concept, where we store a part of frequently changing state data, we decided to finally move all state information into HASD leaving just a few small static tables in Postgres. One of the key points in making this decision was the performance and flexibility of HASD — we are the authors of everything under the hood and can solve any problems and do all the necessary changes at our discretion. Once we hit the hardware limits like CPU, RAM or network capacity, we will need this knowledge a lot. By the way, the GPS-Trace platform, based on flespi as a backend, uses HASD as a primary state storage system. And these guys are doing great things.

We’ll leave other resolutions internal even though we are generally very open. It’s like for men and women to stay attractive they need to have some small secrets and reveal them one by time. Same here, I will tell you about other ideas a bit later. For example, is flespi he or she, what kind of users we are focusing on, what new features you should expect from us soon and so on.

This was a wonderful week. We were talking days on end and now we are longing to start working hard to implement the resolutions we generated — it will take months but we have a goal and now we know the shortest way!